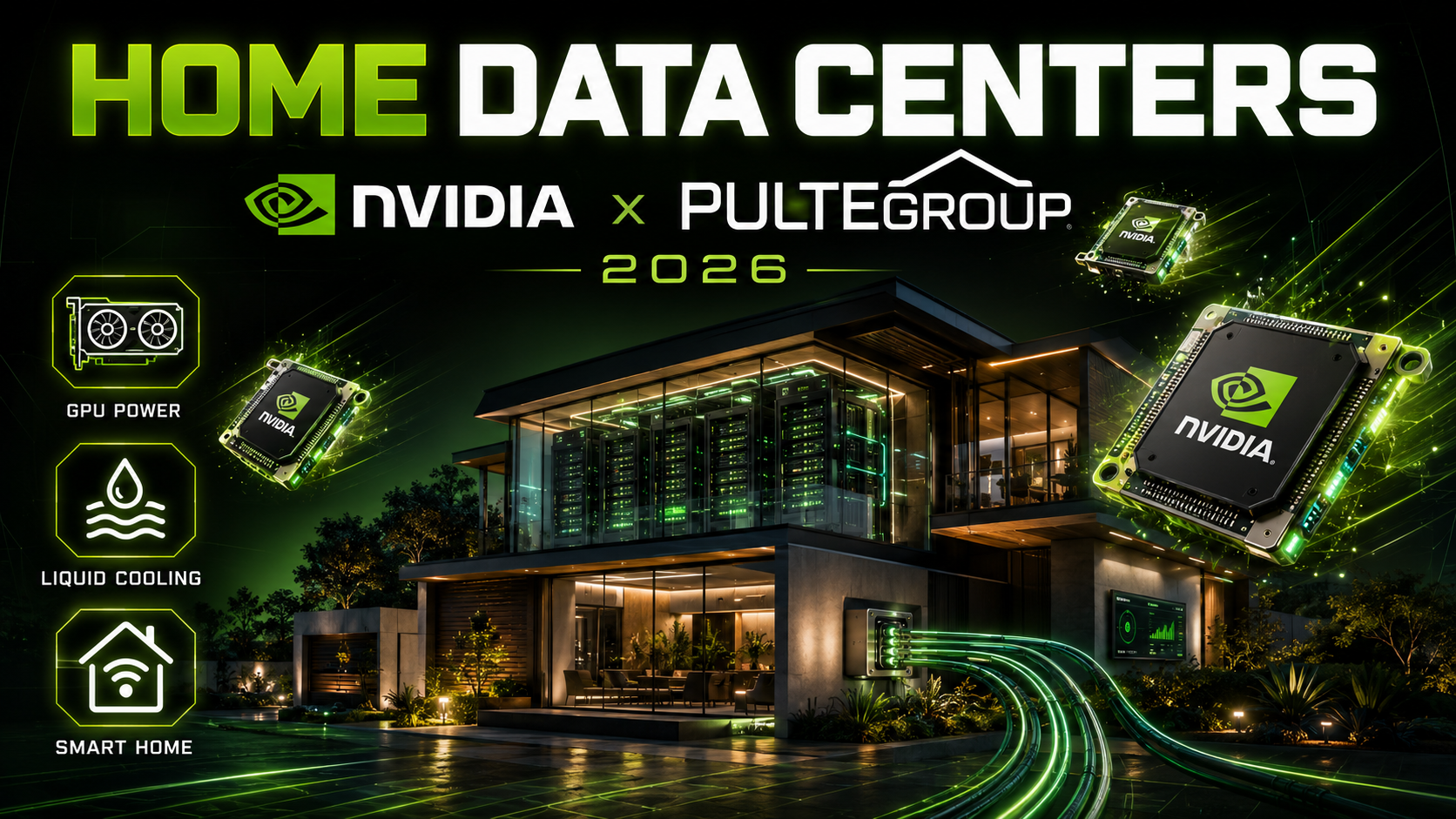

Home Data Centers in 2026: Nvidia and PulteGroup Turn Houses Into AI Compute Nodes

What if your house didn’t just consume electricity, but earned money by running AI workloads? That’s the pitch behind home data centers, one of the wildest infrastructure plays of 2026. Nvidia, PulteGroup (America’s third-largest homebuilder), and smart panel startup Span have partnered to deploy mini data centers inside individual homes. The units run on liquid-cooled Nvidia Blackwell GPUs, make virtually no noise, and could cut a homeowner’s electricity and internet bills to zero.

It sounds like science fiction. But PulteGroup is already testing the technology in new home communities, and the economics might actually work. Here’s how your next house could double as an AI compute node — and why this matters for the future of data center infrastructure.

Why Home Data Centers Solve AI’s Power Crisis

The AI industry has a massive infrastructure problem. Global AI data center spending could hit $7 trillion by 2030, and every major tech company is racing to build more compute capacity. But there are two enormous bottlenecks: power availability and public opposition.

Traditional hyperscale data centers consume hundreds of megawatts of electricity — equivalent to powering small cities. The demand is so intense that data center operators are buying nuclear reactors, bidding for offshore wind farms, and even exploring floating ocean platforms to find enough power.

Meanwhile, communities across the United States are increasingly pushing back against new data center construction. Nobody wants a massive, humming facility consuming their local grid capacity, driving up electricity prices, and lowering property values. Zoning battles and NIMBYism are slowing data center deployments in major markets like Northern Virginia, Phoenix, and Dallas.

Enter Span’s radical solution: instead of building a few massive data centers, distribute the compute across thousands of individual homes. Each home uses only a fraction of its available electrical capacity, so the unused power can run AI workloads without requiring any new grid infrastructure.

Home Data Centers: What Is XFRA Technology and How Does It Work?

XFRA is Span’s distributed data center solution, announced in April 2026. The system places compact compute nodes in homes and small businesses, turning unused residential electrical capacity into processing power for hyperscalers and AI cloud providers.

Each XFRA node is a self-contained compute unit that includes:

Hardware: Dell PowerEdge servers featuring 16 Nvidia RTX Pro 6000 Blackwell Server Edition GPUs, 4 AMD EPYC CPUs, and 3 TB of RAM. These are the same class of GPUs used in professional data centers — liquid-cooled, high-performance silicon designed for AI inference and training workloads.

Cooling: The XFRA units use liquid cooling rather than fans. This means they produce virtually no noise — a critical requirement for residential deployment. You won’t hear your house running AI workloads.

Power management: Each node integrates with Span’s smart electrical panel, which monitors the home’s energy consumption in real time. The node draws power only when the home isn’t using its full capacity. According to Span, the average home operates at just 40% of its peak power capacity, leaving significant headroom for compute workloads.

Connectivity: XFRA nodes connect to the broader compute network via the home’s existing internet connection, supplemented by dedicated connectivity where needed.

The complete installation includes Span’s smart electrical panel, the XFRA compute unit, a home backup battery, and in some cases solar panels. For homeowners, the installation is free — Span covers the cost in exchange for access to the unused electrical capacity.

PulteGroup’s Pilot Program

PulteGroup, one of the nation’s largest homebuilders with approximately 31,000 homes delivered annually, is the first major builder to test the concept. The company has deployed XFRA units in a handful of new home communities and is actively assessing the economics and operational capabilities.

The pilot is currently in its early stages. Span plans a larger proof of concept in Q3 2026, deploying 100 nodes in new residential construction homes in a southwestern state — likely Nevada or Arizona, where grid capacity and new housing construction are both abundant.

For PulteGroup, the appeal is straightforward: homes with built-in XFRA nodes could be marketed as “income-generating properties” that lower or eliminate utility costs. In a housing market where affordability is the top concern for buyers, a home that pays for its own electricity and internet is a powerful selling point.

What Homeowners Get

The homeowner economics are where this gets interesting. If your home hosts an XFRA node, Span compensates you for the energy and internet bandwidth the system uses. The compensation comes in the form of heavily discounted or completely free electricity and internet service.

Think about that for a moment. The average American household spends roughly $2,000-$3,000 per year on electricity and internet combined. If XFRA eliminates those costs entirely, that’s the equivalent of a significant annual income boost — essentially passive revenue from your home’s unused electrical capacity.

The system also includes a home backup battery, which provides emergency power during outages. And the smart electrical panel gives homeowners detailed visibility into their energy consumption patterns, potentially enabling additional savings through optimized usage.

Span emphasizes that homeowners maintain full control. The compute workloads running on XFRA nodes are managed remotely and don’t affect the homeowner’s electricity, internet speed, or quality of life. If the home needs its full electrical capacity — during a heat wave or when hosting a large gathering — the XFRA node can be throttled or temporarily shut down.
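The throttling behavior described above can be sketched in a few lines. This is an illustration only, not Span’s control logic: the `PanelReading` type, the `compute_budget_kw` function, the 1 kW safety margin, the 24 kW service size, and the 12.5 kW node size are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class PanelReading:
    """One snapshot from a hypothetical smart-panel feed."""
    panel_capacity_kw: float  # maximum power the home's service can deliver
    home_load_kw: float       # what the household is drawing right now


def compute_budget_kw(reading: PanelReading,
                      node_max_kw: float,
                      safety_margin_kw: float = 1.0) -> float:
    """Return how much power the compute node may draw right now.

    The household always comes first: the node gets only what is left
    after the home's own load and a safety margin, capped at the node's
    maximum draw and floored at zero (a full shutdown).
    """
    headroom = reading.panel_capacity_kw - reading.home_load_kw - safety_margin_kw
    return max(0.0, min(node_max_kw, headroom))


# Illustrative numbers only: a 24 kW service and a 12.5 kW node.
typical = PanelReading(panel_capacity_kw=24.0, home_load_kw=9.6)    # 40% of capacity
heat_wave = PanelReading(panel_capacity_kw=24.0, home_load_kw=23.5)

print(compute_budget_kw(typical, node_max_kw=12.5))    # node can run at full power
print(compute_budget_kw(heat_wave, node_max_kw=12.5))  # node is shut down
```

With the home at 40% of its capacity, the node in this example can run flat out. During the simulated heat wave the budget drops to zero, which corresponds to the temporary shutdown Span describes.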

The Economics: 80% Cheaper Than Traditional Data Centers

The financial case for distributed home data centers is compelling on paper. According to Span, a traditional 100 MW data center costs roughly $15 million per megawatt and takes three to five years to build. By contrast, Span claims it can match that capacity by deploying XFRA nodes across 8,000 new homes in approximately six months at just $3 million per megawatt.

That’s an 80% cost reduction and a dramatically faster deployment timeline. The speed advantage comes from the fact that the electrical infrastructure already exists — every home has a utility connection, and most homes have significant unused capacity. No new substations, no new transmission lines, no multi-year construction permits.
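The arithmetic behind those claims is easy to check. The short script below uses only the figures Span has quoted; the function names are made up for this example, and the per-home number is simply what falls out of dividing 100 MW across 8,000 homes.

```python
TRADITIONAL_COST_PER_MW = 15_000_000  # USD, Span's figure for a conventional build
XFRA_COST_PER_MW = 3_000_000          # USD, Span's claimed figure
TARGET_CAPACITY_MW = 100
HOMES = 8_000


def cost_reduction(baseline: float, alternative: float) -> float:
    """Fractional saving of `alternative` relative to `baseline`."""
    return (baseline - alternative) / baseline


def per_home_kw(capacity_mw: float, homes: int) -> float:
    """Average compute capacity each home must host, in kilowatts."""
    return capacity_mw * 1_000 / homes


saving = cost_reduction(TRADITIONAL_COST_PER_MW, XFRA_COST_PER_MW)
print(f"Cost reduction: {saving:.0%}")                                          # 80%
print(f"Traditional build: ${TRADITIONAL_COST_PER_MW * TARGET_CAPACITY_MW:,}")  # $1,500,000,000
print(f"XFRA build:        ${XFRA_COST_PER_MW * TARGET_CAPACITY_MW:,}")         # $300,000,000
print(f"Per-home capacity: {per_home_kw(TARGET_CAPACITY_MW, HOMES)} kW")        # 12.5 kW
```

In other words, Span’s comparison implies a $1.5 billion facility replaced by $300 million of distributed hardware, with each home hosting about 12.5 kW of compute.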

The approach also sidesteps the NIMBYism problem entirely. There’s no massive facility to oppose, no zoning battle to fight, and no community pushback to manage. Each home contributes a small amount of compute, but the aggregate across thousands of homes adds up to meaningful data center capacity.

Nvidia’s Strategic Interest

Nvidia’s involvement is significant. The company is providing its latest Blackwell-generation GPUs — specifically the RTX Pro 6000 Server Edition — for XFRA nodes. This represents one of the first deployments of liquid-cooled Blackwell GPUs in a residential setting.

For Nvidia, distributed home data centers represent an entirely new market for its GPUs. If XFRA scales to thousands or millions of homes, that’s a massive new revenue stream beyond traditional data center customers. Nvidia’s $40 billion AI investment strategy appears to extend to supporting novel deployment models like this.

The partnership also aligns with Nvidia’s broader push to democratize AI infrastructure. Rather than concentrating compute in a handful of massive hyperscale facilities, distributing it across millions of homes creates a more resilient, geographically diverse compute network.

The Skeptics’ Case

Not everyone is convinced. There are legitimate concerns about the distributed home data center model:

Reliability. Purpose-built data centers target uptime as high as 99.999% through redundant power, cooling, and networking. A compute node sitting in someone’s garage doesn’t have that redundancy. Power outages, internet disruptions, and hardware failures at individual homes could make the distributed network less reliable than centralized alternatives.

Security. Placing AI compute hardware in thousands of private homes raises security questions. Physical access to servers is harder to control in a residential setting than in a secured data center facility. The systems would need robust encryption and tamper detection to prevent unauthorized access.

Latency. Residential internet connections, even fast ones, can’t match the dedicated fiber and low-latency networking in purpose-built data centers. For AI workloads that require tight coordination between thousands of GPUs — like training large language models — distributed home nodes may not be suitable.

Heat and wear. Running 16 GPUs continuously in a residential structure, even with liquid cooling, generates heat and puts wear on the home’s electrical system. Long-term effects on home infrastructure, insurance implications, and maintenance responsibilities remain unclear.

Scale limitations. Even with 8,000 homes providing 100 MW of capacity, that’s modest compared to hyperscale data centers that are being built at 500 MW to 1 GW scales. Distributed home compute may complement traditional data centers rather than replace them.
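To put the reliability concern in numbers, the sketch below converts an uptime percentage into minutes of downtime per year, then estimates how many home nodes would have to run the same workload to match a 99.999% target. The 99% availability for a single home node is an assumption chosen for illustration, not a figure from Span, and the replica math assumes node failures are independent. A regional outage would break that assumption.

```python
import math

MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def annual_downtime_minutes(availability: float) -> float:
    """Expected minutes of downtime per year at a given availability."""
    return (1.0 - availability) * MINUTES_PER_YEAR


def replicas_needed(node_availability: float, target: float) -> int:
    """Copies of a workload needed so that at least one copy is up with
    probability >= target, ASSUMING node failures are independent.
    """
    if node_availability >= target:
        return 1
    # P(all n copies down) = (1 - a)^n must be <= 1 - target.
    n = math.log(1.0 - target) / math.log(1.0 - node_availability)
    return math.ceil(n)


print(f"{annual_downtime_minutes(0.99999):.2f} min/year at 99.999%")
print(f"{annual_downtime_minutes(0.99) / 60:.1f} hours/year at 99%")
print(replicas_needed(0.99, 0.99999), "copies of a 99% node to reach 99.999%")
```

Five nines allows only about five minutes of downtime a year. Under these assumptions, a hypothetical 99% home node would be down for more than 87 hours, and it would take three of them running the same job to close the gap.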

The Bigger Picture: $7 Trillion in AI Infrastructure

The PulteGroup pilot exists within a staggering context. Global AI data center spending is projected to reach $7 trillion by 2030. Big Tech companies alone have committed roughly $725 billion in capital expenditure for 2026, almost entirely for data centers, custom chips, and AI infrastructure.

This level of spending is creating an arms race for power. Companies are buying nuclear power plants, exploring floating data centers, and even investigating orbital compute satellites. Against that backdrop, tapping into the distributed electrical capacity of millions of American homes seems almost elegant in its simplicity.

If just 1% of America’s roughly 140 million housing units hosted XFRA nodes, that would represent 1.4 million compute nodes distributed across the country. Assuming 19.2 kW per node (higher than the 12.5 kW per home implied by Span’s figure of 8,000 homes per 100 MW), that’s approximately 27 gigawatts of distributed compute capacity, more than all existing US data centers combined.
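That estimate is sensitive to the per-node power figure, as a quick calculation shows. The function name below is invented for this example; the inputs are the article’s own numbers, with 12.5 kW being the per-home figure implied by spreading 100 MW across 8,000 homes.

```python
def fleet_capacity_gw(housing_units: int, adoption_rate: float, node_kw: float):
    """Return (node count, total capacity in GW) for a given adoption rate."""
    nodes = round(housing_units * adoption_rate)
    return nodes, nodes * node_kw / 1_000_000  # kW -> GW


nodes, gw = fleet_capacity_gw(140_000_000, 0.01, 19.2)
print(f"{nodes:,} nodes -> {gw:.1f} GW")         # 1,400,000 nodes -> 26.9 GW

_, gw_low = fleet_capacity_gw(140_000_000, 0.01, 12.5)
print(f"At 12.5 kW per node: {gw_low:.1f} GW")   # 17.5 GW
```

At 12.5 kW per node the same 1% adoption yields 17.5 GW rather than roughly 27 GW, so the headline number depends heavily on how much power each home can actually spare.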

That’s the dream, at least. Whether the reality matches the math remains to be seen.

What Comes Next

The immediate next step is Span’s Q3 2026 proof of concept: 100 XFRA nodes deployed in new PulteGroup homes in the Southwest. If that pilot succeeds — demonstrating reliable uptime, satisfied homeowners, and cost-effective compute delivery — expect rapid expansion to other homebuilders and existing home retrofits.

Span has publicly stated its target of 1 GW of annual capacity by 2027. Achieving that would require deploying nodes in roughly 50,000 homes — ambitious but not impossible given PulteGroup’s annual construction volume of 31,000 homes and the availability of other major builders.
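The 50,000-home figure can be sanity-checked against the per-node power numbers quoted elsewhere in this article. The helper names below are made up for this example, and the comparison to PulteGroup’s volume assumes, unrealistically, that every new home would host a node.

```python
import math

TARGET_GW = 1.0
PULTE_HOMES_PER_YEAR = 31_000


def homes_needed(target_gw: float, node_kw: float) -> int:
    """Homes required to reach target_gw if each hosts one node of node_kw."""
    return math.ceil(target_gw * 1_000_000 / node_kw)


def implied_node_kw(target_gw: float, homes: int) -> float:
    """Per-home power implied by reaching target_gw with a given home count."""
    return target_gw * 1_000_000 / homes


print(implied_node_kw(TARGET_GW, 50_000))  # 20.0 kW per home

for node_kw in (12.5, 19.2):
    homes = homes_needed(TARGET_GW, node_kw)
    years = homes / PULTE_HOMES_PER_YEAR
    print(f"{node_kw} kW/node -> {homes:,} homes, {years:.1f} years of PulteGroup output")
```

Reaching 1 GW with 50,000 homes implies about 20 kW per home. At 12.5 kW per home the requirement rises to 80,000 homes, which is more than two and a half years of PulteGroup’s entire output.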

For homebuyers, the question is simple: would you host an AI data center in your home in exchange for free electricity and internet? If the answer is yes — and for many budget-conscious buyers it will be — then the future of AI infrastructure might not be a warehouse in the desert. It might be the house next door.

Economics of Home Data Centers in 2026

The business model behind home data centers is straightforward but revolutionary. Homeowners allow a compact, liquid-cooled Nvidia Blackwell GPU rack, the XFRA unit, to be installed in a utility closet or garage. The unit draws between 5 and 15 kW of power and connects to the fiber internet already present in PulteGroup’s new construction communities as part of the pilot program.

Participating homeowners receive monthly payments ranging from $200 to $800 depending on utilization. According to CNBC’s analysis, a typical participant in Arizona or Texas could see net annual returns of $3,600 to $7,200 after energy costs. Heat management is the breakthrough: XFRA’s liquid cooling captures waste heat and redirects it into the home’s hot water system. Data Center Knowledge reports that this heat recapture eliminates 60-80% of the energy cost.
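It helps to put the monthly and annual figures on the same footing. The snippet below only rescales the quoted ranges; the function names are invented for this example, and nothing here models actual utilization or energy prices.

```python
MONTHLY_PAYMENT_RANGE = (200, 800)  # USD per month, as quoted
NET_ANNUAL_RANGE = (3_600, 7_200)   # USD per year after energy costs, as quoted


def annualize(monthly_range):
    """Convert a (low, high) monthly range to an annual range."""
    low, high = monthly_range
    return low * 12, high * 12


def per_month(annual_range):
    """Convert a (low, high) annual range to a monthly range."""
    low, high = annual_range
    return low / 12, high / 12


gross_annual = annualize(MONTHLY_PAYMENT_RANGE)
net_monthly = per_month(NET_ANNUAL_RANGE)

print(f"Gross payments: ${gross_annual[0]:,} to ${gross_annual[1]:,} per year")
print(f"Net returns:    ${net_monthly[0]:,.0f} to ${net_monthly[1]:,.0f} per month")
```

The payments work out to $2,400 to $9,600 a year, while the quoted net returns equal $300 to $600 a month. The low end of the net range sits above the low end of the gross range, so the two ranges presumably describe different participants or utilization levels. The sources cited here do not say which.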

The International Energy Agency estimates global data center energy consumption will triple by 2030. Distributed home data centers address edge computing needs that don’t require hyperscale GPU clusters. Utility Dive notes that utilities are cautiously optimistic because distributed loads are more manageable. The model also complements Nvidia’s $40 billion AI investment strategy of owning every layer of the AI stack.